Applies To:

BIG-IP AAM

- 11.6.5, 11.6.4, 11.6.3, 11.6.2, 11.6.1

BIG-IP APM

- 11.6.5, 11.6.4, 11.6.3, 11.6.2, 11.6.1

BIG-IP GTM

- 11.6.5, 11.6.4, 11.6.3, 11.6.2, 11.6.1

BIG-IP Link Controller

- 11.6.5, 11.6.4, 11.6.3, 11.6.2, 11.6.1

BIG-IP LTM

- 11.6.5, 11.6.4, 11.6.3, 11.6.2, 11.6.1

BIG-IP AFM

- 11.6.5, 11.6.4, 11.6.3, 11.6.2, 11.6.1

BIG-IP PEM

- 11.6.5, 11.6.4, 11.6.3, 11.6.2, 11.6.1

BIG-IP ASM

- 11.6.5, 11.6.4, 11.6.3, 11.6.2, 11.6.1

Using a SNAT pool

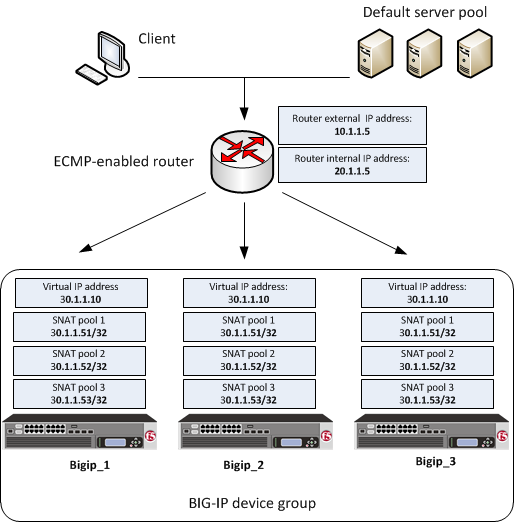

One way to set up all-active clustering of BIG-IP devices with an ECMP-enabled router is to use SNAT pools. SNAT pools let you segment traffic per application and scale the number of connections per pool member. To use SNAT pools, you first create a unique SNAT pool for each device in the BIG-IP device group, and then create an iRule that, on each device, selects that device's SNAT pool.

With this SNAT pool configuration, the server pool members return traffic to the SNAT address or addresses of the originating BIG-IP device in the cluster, instead of to that device's unique self IP address as in the SNAT Auto Map configuration.
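Per-device pools only work if no translation address is shared between devices. The following is a minimal Python sketch, assuming three devices named Bigip_1 to Bigip_3 with one translation address each. Only snat-pool-1 and 30.1.1.51 appear in the sample BGP configuration; the other pool names and addresses are hypothetical. The sketch builds a map from translation address to owning device and rejects overlapping pools:

```python
# Hypothetical model of per-device SNAT pools in an all-active ECMP cluster.
# Only snat-pool-1 / 30.1.1.51 on Bigip_1 come from the sample configuration;
# the other pool names and addresses are illustrative assumptions.
from ipaddress import IPv4Address

# One unique SNAT pool per device: device -> (pool name, translation addresses).
SNAT_POOLS: dict[str, tuple[str, list[IPv4Address]]] = {
    "Bigip_1": ("snat-pool-1", [IPv4Address("30.1.1.51")]),
    "Bigip_2": ("snat-pool-2", [IPv4Address("30.1.1.52")]),  # assumed
    "Bigip_3": ("snat-pool-3", [IPv4Address("30.1.1.53")]),  # assumed
}


def build_return_map(pools):
    """Map each translation address to the device that owns it.

    Raises ValueError if two devices share an address, because return
    traffic to that address could then reach the wrong device.
    """
    owner: dict[IPv4Address, str] = {}
    for device, (_pool_name, addresses) in pools.items():
        for addr in addresses:
            if addr in owner:
                raise ValueError(f"{addr} is in the SNAT pools of both {owner[addr]} and {device}")
            owner[addr] = device
    return owner


return_map = build_return_map(SNAT_POOLS)
# A server reply addressed to 30.1.1.52 belongs to a connection Bigip_2 translated.
print(return_map[IPv4Address("30.1.1.52")])  # Bigip_2
```

Because each device also advertises its own translation addresses through BGP, a reply addressed to a given SNAT address is routed back to the device that owns it.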

This illustration shows an example of this configuration.

BIG-IP system clustering using ECMP with a SNAT pool

Creating a load balancing pool

Defining a route to the server

Creating SNAT pools

Creating a string data group

Creating an iRule for SNAT pool selection

Example of an iRule for SNAT pool selection

This example shows an iRule that selects the correct SNAT pool on a BIG-IP device in a device group.
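The iRule itself is not reproduced in this section. Because the same iRule and data group are synced to every device, the lookup presumably keys on something that differs per device, such as the host name. The following is a rough model only, not iRule code. It assumes the string data group maps each device's host name to the name of that device's SNAT pool; the host names and this data group layout are hypothetical.

```python
# Hypothetical model of per-device SNAT pool selection (not iRule code).
# The data group layout and host names are illustrative assumptions.
from typing import Optional

# String data group: key = device host name, value = SNAT pool name (assumed).
SNAT_POOL_DATA_GROUP = {
    "bigip1.example.com": "snat-pool-1",
    "bigip2.example.com": "snat-pool-2",
    "bigip3.example.com": "snat-pool-3",
}


def select_snat_pool(hostname: str, data_group: dict[str, str]) -> Optional[str]:
    """Return the SNAT pool for the device handling the connection.

    Every device runs the same synced rule and only the host name differs,
    so each device resolves to its own pool. An unknown host name yields
    None; this sketch never falls back to another device's pool.
    """
    return data_group.get(hostname)


print(select_snat_pool("bigip2.example.com", SNAT_POOL_DATA_GROUP))  # snat-pool-2
print(select_snat_pool("unknown.example.com", SNAT_POOL_DATA_GROUP))  # None
```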

Creating a virtual server

Confirming virtual address exclusion from a traffic group

Syncing the BIG-IP configuration to the device group

Configuring the BGP protocol

Perform this task to configure Border Gateway Protocol (BGP) dynamic routing.

Sample BGP configuration using a SNAT pool

This example shows part of a Border Gateway Protocol (BGP) configuration on a BIG-IP device that accepts traffic from an upstream ECMP-enabled router. In this example, one or more static routes are used to distribute the unique SNAT pool addresses associated with each BIG-IP device.

| Configuration entry | Description |
|---|---|
| bgp router-id 20.1.1.2 | The bgp router-id value is the self IP address for the external VLAN on device Bigip_1. This address must be unique within the BGP configuration on each BIG-IP device in the device group. |
| redistribute static route-map f5-to-upstream | This entry ensures that the system advertises the SNAT pool address specified in the ip route entry. |
| neighbor 20.1.1.5 | The neighbor value is the IP address of the ECMP-enabled router. You must repeat the neighbor statement for each upstream router associated with a BIG-IP device. These neighbor statements are the same within the BGP configuration on each BIG-IP device in the device group. |
| ip route 30.1.1.51/32 | The ip route value is the translation address contained in SNAT pool snat-pool-1. Setting ip route to the SNAT pool address ensures that the system advertises this address. If the SNAT pool in your own configuration contains more than one translation address, you must include an ip route entry for each translation address in the SNAT pool. This address must be unique within the BGP configuration for each device in the device group. |
| ip prefix-list | The ip prefix-list entry specifies that the virtual IP address 30.1.1.10/32 and the SNAT address 30.1.1.51/32 are allowed to be advertised. |
| set ip next-hop 20.1.1.2 | The set ip next-hop value is the self IP address for the external VLAN on device Bigip_1. This next-hop address is used for traffic that is destined for the virtual IP address and potentially the specified SNAT pool address. This address must be unique within the BGP configuration on each BIG-IP device in the device group. |
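The uniqueness rules in this table can be checked mechanically. The sketch below is a hypothetical Python checker, not an F5 tool. It models each device's router-id, next-hop, SNAT ip route entries, and neighbors. It reports values that must be unique but are duplicated, a router-id that differs from the next-hop (the table gives both as the external self IP), and neighbor lists that differ between devices. Only Bigip_1's values and the neighbor 20.1.1.5 come from the sample; the other addresses are assumptions.

```python
# Hypothetical checker for the BGP rules in the table above (not an F5 tool).
from dataclasses import dataclass


@dataclass(frozen=True)
class BgpDeviceSettings:
    device: str
    router_id: str          # bgp router-id: external VLAN self IP
    next_hop: str           # set ip next-hop: the same external VLAN self IP
    snat_routes: tuple      # one ip route entry per SNAT translation address
    neighbors: frozenset    # neighbor entries: the upstream ECMP router(s)


def check_cluster(devices: list) -> list:
    """Return rule violations; an empty list means every check passed."""
    problems = []
    owners = {}  # (entry kind, value) -> first device that used the value
    for d in devices:
        if d.router_id != d.next_hop:
            problems.append(f"{d.device}: router-id and next-hop should both be the external self IP")
        unique_values = [("router-id", d.router_id), ("next-hop", d.next_hop)]
        unique_values += [("ip route", route) for route in d.snat_routes]
        for key in unique_values:
            if key in owners:
                problems.append(f"{key[0]} {key[1]} used by both {owners[key]} and {d.device}")
            else:
                owners[key] = d.device
    # Neighbor statements must be identical on every device.
    if len({d.neighbors for d in devices}) > 1:
        problems.append("neighbor statements differ between devices")
    return problems


upstream = frozenset({"20.1.1.5"})
cluster = [
    BgpDeviceSettings("Bigip_1", "20.1.1.2", "20.1.1.2", ("30.1.1.51/32",), upstream),
    BgpDeviceSettings("Bigip_2", "20.1.1.3", "20.1.1.3", ("30.1.1.52/32",), upstream),  # assumed
    BgpDeviceSettings("Bigip_3", "20.1.1.4", "20.1.1.4", ("30.1.1.53/32",), upstream),  # assumed
]
print(check_cluster(cluster))  # []
```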

Implementation result

After following the instructions in this implementation, you now have a three-member BIG-IP device group, where the same virtual server resides on each device, and each device is configured for dynamic routing using the Border Gateway Protocol (BGP). Also, the SNAT pool that you created on each device allows the upstream ECMP-enabled router to send traffic through port 179 on each BIG-IP device.

With this configuration, when application traffic comes through the ECMP-enabled router, the router can use an algorithm to select the best equal-cost path to any one of the BIG-IP devices in the device group. If any BIG-IP device becomes unavailable, the ECMP algorithm causes the ECMP-enabled router to forward that traffic to another device in the device group. Furthermore, each BIG-IP device has an administrative partition whose local traffic objects are synchronized to the devices in the Sync-Only device group. All devices in the device group use the default load balancing pool, which contains a single server on the ECMP router's internal network, to process application traffic.
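To illustrate the per-flow path selection and failover described above, here is a minimal Python model. It assumes the router hashes each flow's 5-tuple and takes the result modulo the number of available next hops. Real routers use vendor-specific hash functions. The client address and the 20.1.1.3 and 20.1.1.4 self IPs are hypothetical.

```python
# Hypothetical model of ECMP next-hop selection; real routers use their own,
# vendor-specific hash functions. Addresses other than 20.1.1.2 and the
# virtual address 30.1.1.10 are illustrative assumptions.
import hashlib


def ecmp_next_hop(flow: tuple, next_hops: list) -> str:
    """Pick one equal-cost next hop for a flow by hashing its 5-tuple.

    While the set of next hops is unchanged, the same flow always maps to
    the same next hop, which keeps a connection on one BIG-IP device.
    """
    if not next_hops:
        raise ValueError("no equal-cost paths available")
    key = "|".join(map(str, flow)).encode()
    digest = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    # Sort so the result does not depend on the order routes were learned.
    return sorted(next_hops)[digest % len(next_hops)]


devices = ["20.1.1.2", "20.1.1.3", "20.1.1.4"]  # external self IPs (.3 and .4 assumed)
flow = ("198.51.100.7", 40123, "30.1.1.10", 80, "tcp")
first = ecmp_next_hop(flow, devices)
# In this model, if that device stops advertising its routes, the router
# withdraws the path and rehashes the flow across the remaining devices.
survivors = [d for d in devices if d != first]
print(first, "->", ecmp_next_hop(flow, survivors))
```

With plain modulo hashing, removing one next hop can also move flows that were on surviving devices; some routers offer resilient or consistent hashing to limit this.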